SeamPose Powered by XIAO nRF52840, Repurposing Seams for Upper-Body Pose Tracking with Smart Clothing

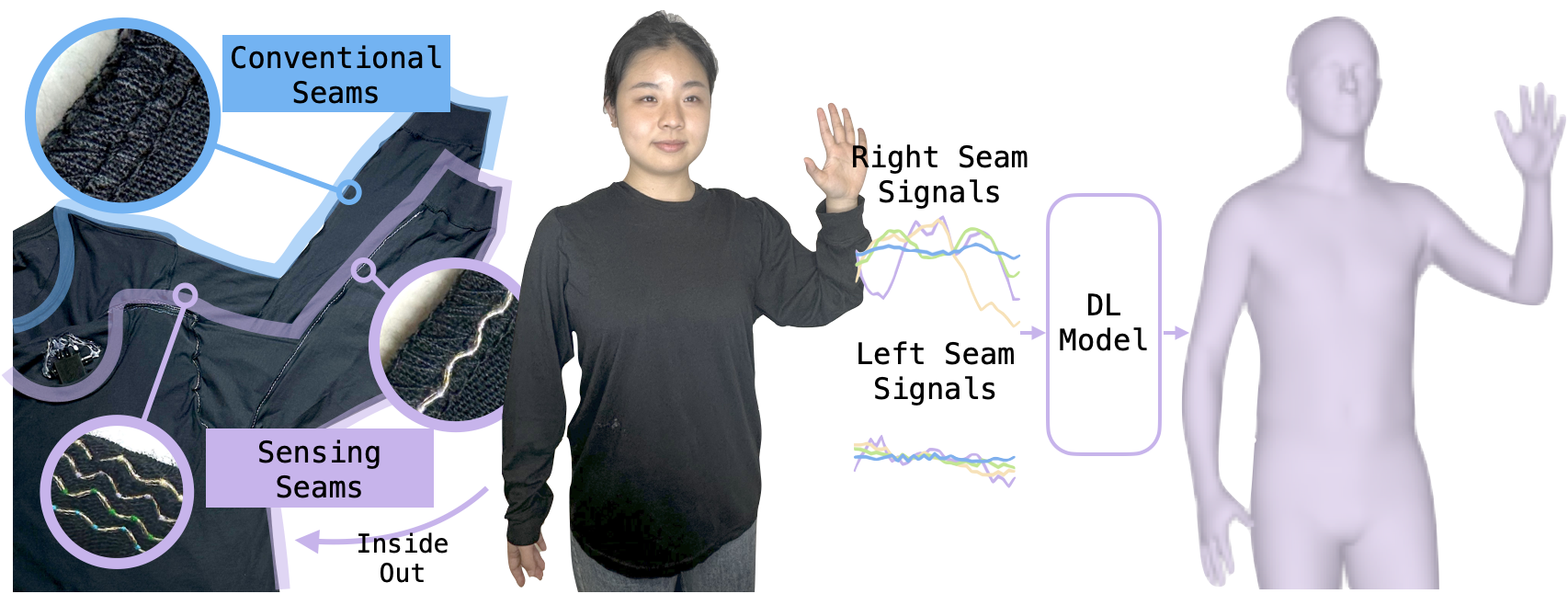

Imagine turning your everyday clothes into smart motion-tracking tools. That’s what a team of researchers at Cornell University has done with SeamPose. The project, led by Catherine Tianhong Yu and Professor Cheng Zhang, uses conductive threads sewn over the seams of a shirt to turn it into an upper-body pose-tracking device built around the XIAO nRF52840. Unlike traditional sensor-laden garments that change the clothing’s appearance and comfort, SeamPose blends into everyday wear without compromising aesthetics or fit.

This project offers exciting potential applications in areas like health monitoring, sports analytics, AR/VR, and human-robot interaction by making wearable tracking more accessible and comfortable.

Hardware

To build SeamPose, the following key hardware elements were used:

- Long-sleeve T-shirt with machine-sewn conductive thread along the seams.

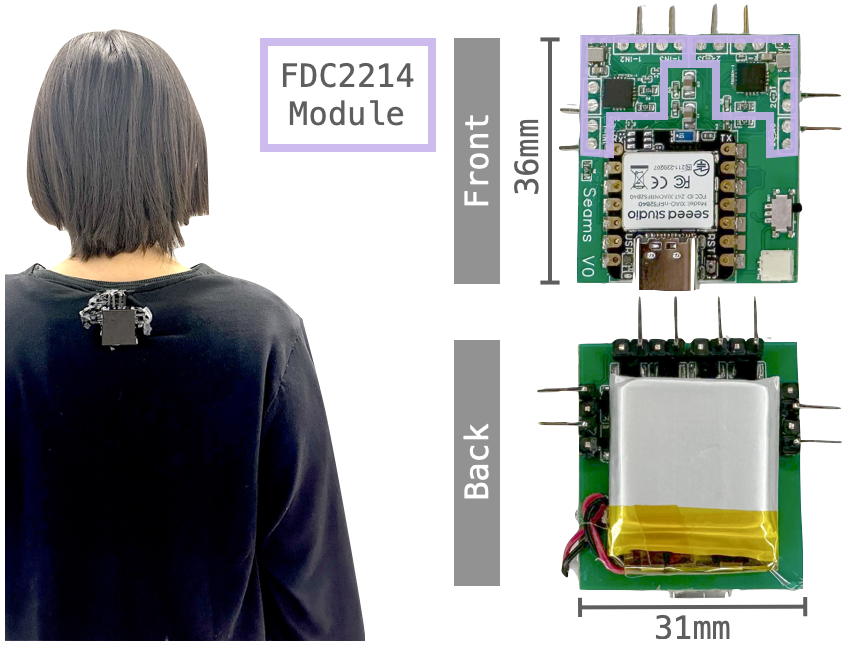

- Customized sensing board:

  - XIAO nRF52840

  - A 36 x 31 mm board with two FDC2214 capacitance-to-digital converters

- 3.7V 290mAh LiPo battery

How SeamPose Works

SeamPose operates by transforming ordinary seams in a long-sleeve shirt into capacitive sensors, enabling real-time tracking of upper-body movements. Conductive threads, specifically insulated silver-plated nylon, are machine-sewn along key seams—such as the shoulders and sleeves—without altering the garment’s appearance or comfort. As the wearer moves, the seams stretch and shift, causing variations in their capacitance. These signals are captured by a customized sensing board integrated with two FDC2214 capacitance-to-digital converters and the XIAO nRF52840 microcontroller. The XIAO nRF52840 transmits the data wirelessly via Bluetooth Low Energy (BLE) to a nearby computer for processing.
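To make the sensing step more concrete, here is a minimal sketch of how a raw FDC2214 reading could be turned into a capacitance estimate on the computer side. The frequency formula follows the FDC2214 datasheet; the 40 MHz reference clock, the 18 µH inductor, and the 33 pF tank capacitor are assumptions for illustration and not values confirmed for the SeamPose board. The function names are hypothetical as well.

```python
import math

FDC2214_RESOLUTION = 2 ** 28  # the FDC2214 reports a 28-bit conversion result


def raw_to_frequency(raw, f_ref_hz=40e6, fin_sel=1):
    """Convert a 28-bit DATA code to the sensor oscillation frequency in Hz.

    f_ref_hz and fin_sel are assumed configuration values, not SeamPose's.
    """
    if not 0 <= raw < FDC2214_RESOLUTION:
        raise ValueError("raw code must fit in 28 bits")
    return fin_sel * f_ref_hz * raw / FDC2214_RESOLUTION


def frequency_to_capacitance(f_sensor_hz, inductance_h=18e-6, c_tank_f=33e-12):
    """Solve f = 1 / (2*pi*sqrt(L*C_total)) for the capacitance added by the seam.

    inductance_h and c_tank_f describe an assumed LC tank, in henries and farads.
    """
    c_total = 1.0 / (inductance_h * (2 * math.pi * f_sensor_hz) ** 2)
    return c_total - c_tank_f


def frame_to_capacitances(raw_frame):
    """Convert one frame of raw channel codes (e.g. eight seams) to farads."""
    return [frequency_to_capacitance(raw_to_frequency(raw)) for raw in raw_frame]
```

Two FDC2214 chips provide four channels each, which lines up with the eight seam sensors, so a frame here would be a list of eight raw codes. A learning-based pipeline may well consume the raw codes directly and skip the conversion to farads entirely.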

The transmitted signals are fed into a deep learning model that maps the seam data to 3D joint positions relative to the pelvis. This allows the system to interpret complex upper-body movements using only eight sensors distributed symmetrically along the shirt. During testing, SeamPose achieved a mean per-joint position error (MPJPE) of 6.0 cm, comparable to more invasive tracking systems. The XIAO nRF52840 handles the real-time wireless data transmission, letting SeamPose combine comfort with precise motion tracking.
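For readers unfamiliar with the metric, MPJPE is the average Euclidean distance between each predicted joint and its ground-truth position. The sketch below shows one common way to compute it on pelvis-relative coordinates. The function names, the joint ordering, and the choice to average over every joint in every frame are assumptions for illustration, not the team's evaluation code.

```python
import math


def pelvis_relative(joints, pelvis_index=0):
    """Shift one frame of (x, y, z) joints so the pelvis sits at the origin.

    pelvis_index is an assumed position of the pelvis in the joint list.
    """
    px, py, pz = joints[pelvis_index]
    return [(x - px, y - py, z - pz) for x, y, z in joints]


def mpjpe(predicted, ground_truth):
    """Mean per-joint position error over all frames and joints.

    Both arguments are lists of frames; each frame is a list of (x, y, z)
    tuples in the same unit (for example centimeters).
    """
    total = 0.0
    count = 0
    for pred_frame, true_frame in zip(predicted, ground_truth):
        if len(pred_frame) != len(true_frame):
            raise ValueError("frames must contain the same number of joints")
        for pred_joint, true_joint in zip(pred_frame, true_frame):
            total += math.dist(pred_joint, true_joint)  # Euclidean distance
            count += 1
    if count == 0:
        raise ValueError("no joints to evaluate")
    return total / count
```

With coordinates expressed in centimeters, the returned value is directly comparable to the 6.0 cm figure quoted above.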

Source: Cornell University Team

What’s Next for SeamPose?

SeamPose offers a glimpse into the future of smart clothing, where everyday garments become powerful tools for motion tracking without sacrificing comfort or design. However, challenges such as real-world deployment and manufacturing smart clothing at scale need to be addressed before broader adoption. The research team plans to explore improved seam placement and enhanced sensor calibration for even more accurate tracking.

If you’re excited about SeamPose and want to dive deeper, check out their paper on the ACM Digital Library.

End Note

Hey community, we’re curating a monthly newsletter centering around the beloved Seeed Studio XIAO. If you want to stay up-to-date with:

- Cool Projects from the Community to get inspiration and tutorials

- Product Updates: firmware update, new product spoiler

- Wiki Updates: new wikis + wiki contribution

- News: events, contests, and other community stuff

Please click the image below to subscribe now!

The post SeamPose Powered by XIAO nRF52840, Repurposing Seams for Upper-Body Pose Tracking with Smart Clothing appeared first on Latest Open Tech From Seeed.