Maximizing Docker Desktop: How Signing In Unlocks Advanced Features

Docker Desktop is more than a local application for containerized development. It is also your gateway to an integrated suite of cloud-native tools that streamline the development workflow. You can use Docker Desktop without signing in, but signing in gives you access to additional features and services across Docker Hub, Build Cloud, Scout, and Testcontainers Cloud, enabling deeper collaboration, enhanced security insights, and scalable cloud resources.

This blog post explores the capabilities unlocked by signing in to Docker Desktop, first for developers and then for administrators, from security insights with Docker Scout to scalable build and testing resources through Docker Build Cloud and Testcontainers Cloud.

Note that the following sections refer to specific Docker subscription plans. With Docker’s newly streamlined subscription plans — Docker Personal, Docker Pro, Docker Team, and Docker Business — developers and organizations can access a scalable suite of tools, from individual productivity boosters to enterprise-grade governance and security. Visit the Docker pricing page to learn more about how these plans support different team sizes and workflows.

Benefits for developers when logged in

Docker Personal

- Access to private repositories: Unlock secure collaboration through private repositories on Docker Hub, ensuring that your sensitive code and dependencies are managed securely across teams and projects.

- Increased pull rate: Signed-in users can pull from Docker Hub at up to 40 pulls/hour per user, compared with 10 pulls/hour per IP address without authentication. The higher limit means fewer interruptions from rate limiting during development.

- Docker Scout CLI: Leverage Docker Scout to proactively secure your software supply chain with continuous security insights from code to production. By signing in, you gain access to powerful CLI commands that help prevent vulnerabilities before they reach production.

- Build Cloud and Testcontainers Cloud: Experience the full power of Docker Build Cloud and Testcontainers Cloud with free trials (7-day for Build Cloud, 30-day for Testcontainers Cloud). These trials give you access to scalable cloud infrastructure that speeds up image builds and enables more reliable integration testing.
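To check which pull limit applies to a given session, you can read the `ratelimit-limit` and `ratelimit-remaining` response headers that Docker Hub returns. The Python sketch below parses such a header and normalizes it to pulls per hour. It assumes the `count;w=window_seconds` header format and the `ratelimitpreview/test` check repository described in Docker Hub's rate-limit documentation. The function names are illustrative, the fetch helper uses an anonymous token only, and the header may be absent for some accounts.

```python
import json
import urllib.request
from typing import NamedTuple, Optional


class RateLimit(NamedTuple):
    pulls: int            # number of pulls allowed per window
    window_seconds: int   # length of the window in seconds


def parse_ratelimit(header_value: str) -> RateLimit:
    """Parse a header value such as '100;w=21600' (assumed format)."""
    count, sep, window = header_value.strip().partition(";w=")
    if not sep:
        raise ValueError(f"unexpected rate-limit header: {header_value!r}")
    return RateLimit(int(count), int(window))


def pulls_per_hour(limit: RateLimit) -> float:
    """Normalize a limit to pulls per hour so different windows compare."""
    return limit.pulls * 3600 / limit.window_seconds


# Endpoints as described in Docker Hub's documentation (assumption: unchanged).
TOKEN_URL = (
    "https://auth.docker.io/token"
    "?service=registry.docker.io"
    "&scope=repository:ratelimitpreview/test:pull"
)
MANIFEST_URL = (
    "https://registry-1.docker.io/v2/ratelimitpreview/test/manifests/latest"
)


def fetch_anonymous_limit() -> Optional[RateLimit]:
    """Fetch the limit for the current IP using an anonymous token."""
    with urllib.request.urlopen(TOKEN_URL) as resp:
        token = json.load(resp)["token"]
    request = urllib.request.Request(
        MANIFEST_URL,
        method="HEAD",  # a HEAD request reads the headers without pulling
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request) as resp:
        value = resp.headers.get("ratelimit-limit")
    return parse_ratelimit(value) if value else None
```

Calling `fetch_anonymous_limit()` requires network access. Comparing its result with the limit shown for a signed-in account is one way to confirm that authentication is taking effect.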

Docker Pro/Team/Business

For users with a paid Docker subscription, additional features are unlocked.

- Unlimited pull rate: No Hub rate limit will be enforced for users with a paid subscription plan.

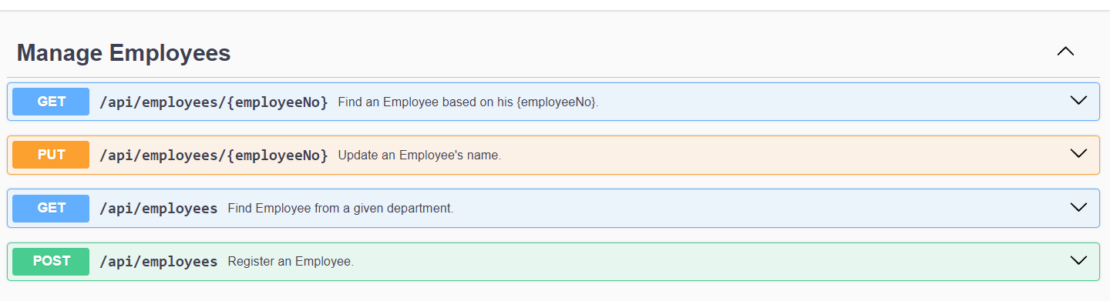

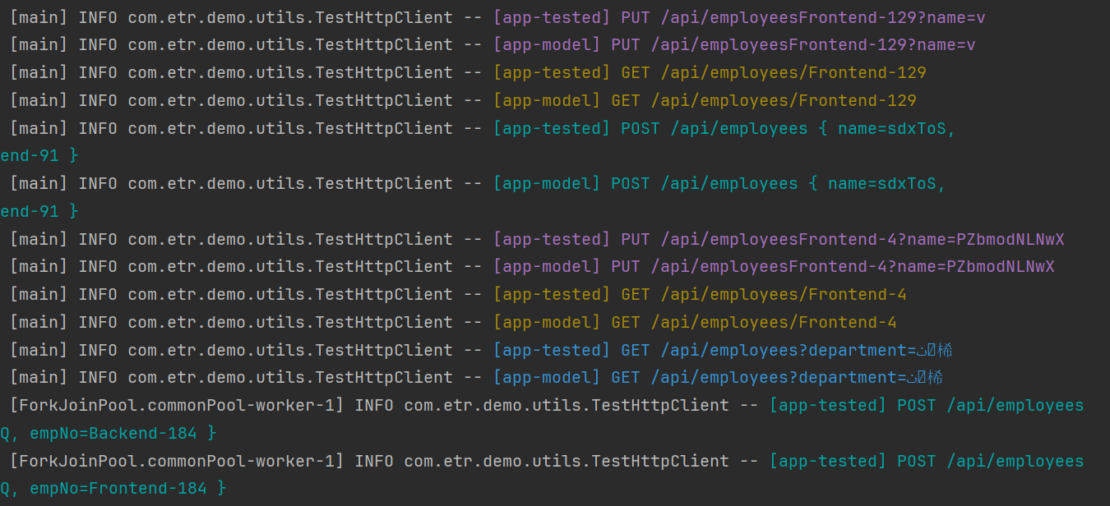

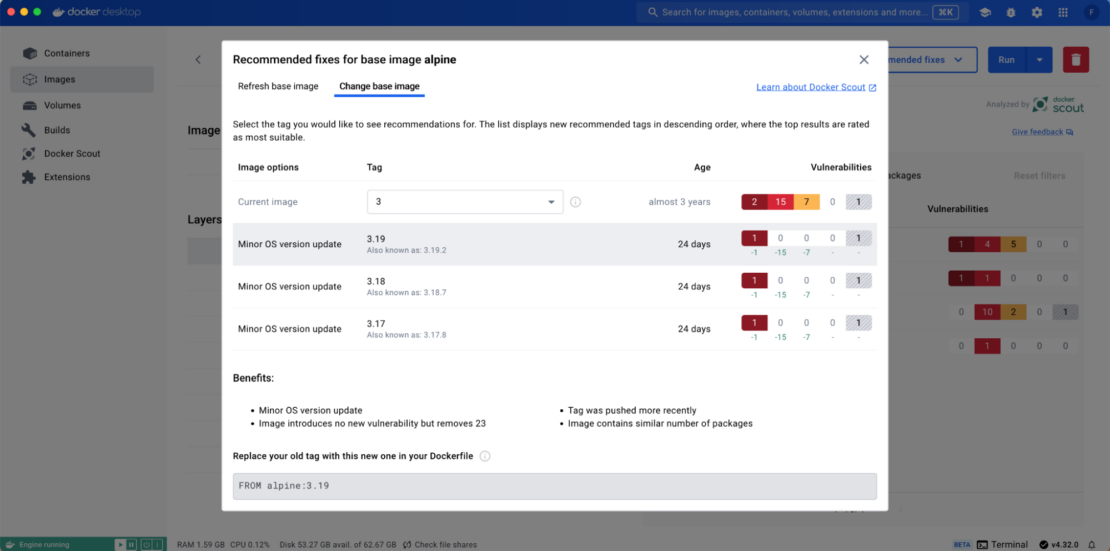

- Docker Scout base image recommendations: Docker Scout offers continuous recommendations for base image updates, empowering developers to secure their applications at the foundational level and fix vulnerabilities early in the development lifecycle.

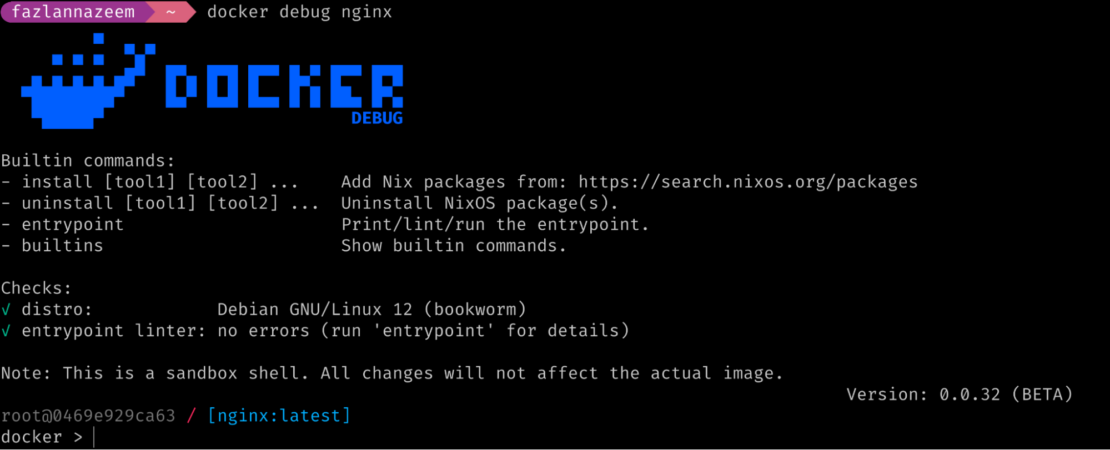

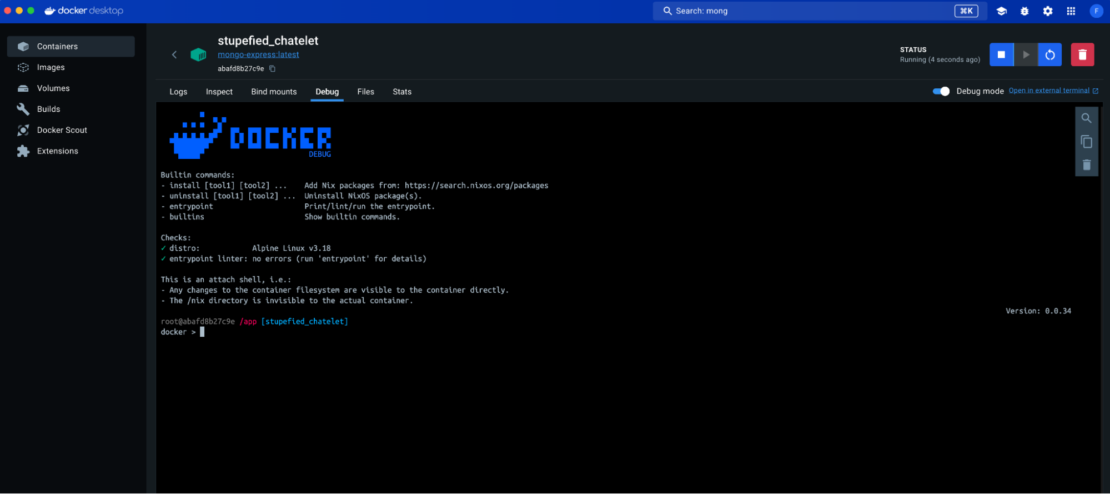

- Docker Debug: The docker debug CLI command helps you debug containers even when their images contain only the minimum required to run your application.

Docker Debug functionalities have also been integrated into the container view of the Docker Desktop UI.

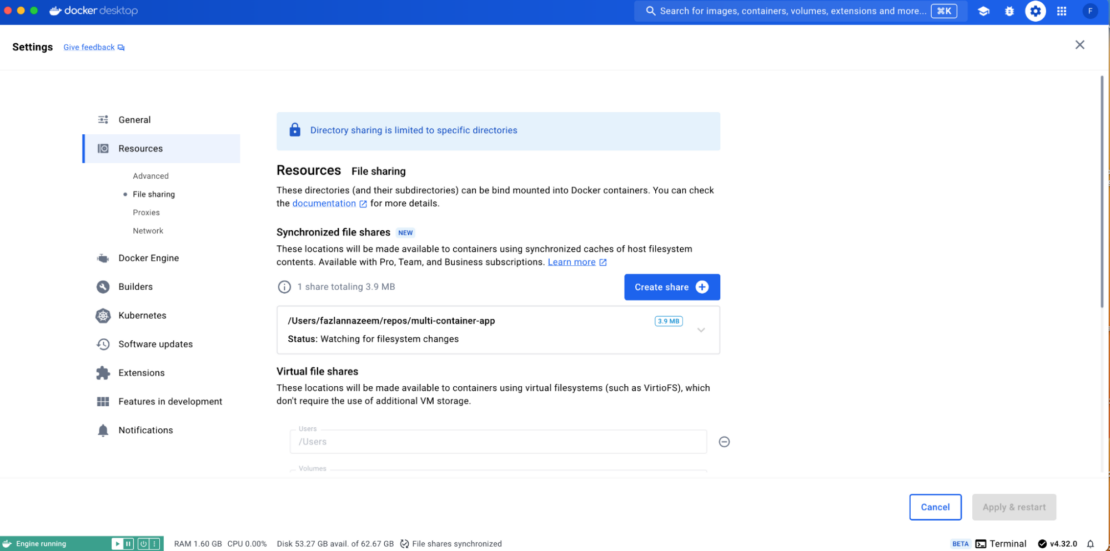

- Synchronized file shares: File sharing between the host and the Docker Desktop VM via bind mounts can be slow for large codebases. Synchronized file shares sync large codebases into containers quickly, so developers can iterate faster.

- Additional free minutes for Docker Build Cloud: Docker Build Cloud helps developer teams speed up image builds by offloading the build process to the cloud. The following benefits are available for users depending on the subscription plan.

  - Docker Pro: 200 mins/month per org

  - Docker Team: 500 mins/month per org

  - Docker Business: 1,500 mins/month per org

- Additional free minutes for Testcontainers Cloud: Testcontainers Cloud simplifies the process for developers to run reliable integration tests using real dependencies defined in code, whether on their laptops or within their team’s CI pipeline. Depending on the subscription plan, the following benefits are available for users:

  - Docker Pro: 100 mins/month per org

  - Docker Team: 500 mins/month per org

  - Docker Business: 1,500 mins/month per org
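The Docker Scout and Docker Debug commands mentioned in the lists above can also be scripted, for example in a pre-push check. The following Python sketch only builds the command lines, so it can be inspected without Docker installed. The image and container names are hypothetical, and the flags shown (`--only-severity`, `--exit-code`) should be checked against `docker scout cves --help` for your installed version.

```python
import shlex
from typing import List, Sequence


def scout_cves_command(
    image: str,
    severities: Sequence[str] = ("critical", "high"),
    fail_on_findings: bool = True,
) -> List[str]:
    """Build a `docker scout cves` invocation filtered by severity."""
    cmd = ["docker", "scout", "cves", "--only-severity", ",".join(severities)]
    if fail_on_findings:
        # Ask the CLI to return a non-zero exit code when CVEs are found.
        cmd.append("--exit-code")
    cmd.append(image)
    return cmd


def scout_recommendations_command(image: str) -> List[str]:
    """Build a `docker scout recommendations` invocation for an image."""
    return ["docker", "scout", "recommendations", image]


def debug_command(target: str) -> List[str]:
    """Build a `docker debug` invocation for a container or image."""
    return ["docker", "debug", target]


# Hypothetical names, used only for illustration.
print(shlex.join(scout_cves_command("myorg/myapp:latest")))
print(shlex.join(scout_recommendations_command("myorg/myapp:latest")))
print(shlex.join(debug_command("my-container")))
```

To run one of these commands, pass the list to `subprocess.run(cmd, check=False)` on a machine where Docker Desktop is installed and signed in, and inspect the return code.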

Benefits for administrators when your users are logged in

Docker Business

Security and governance

The Docker Business plan offers enterprise-grade security and governance controls, which apply only when users are signed in. As of Docker Desktop 4.35.0, these features include:

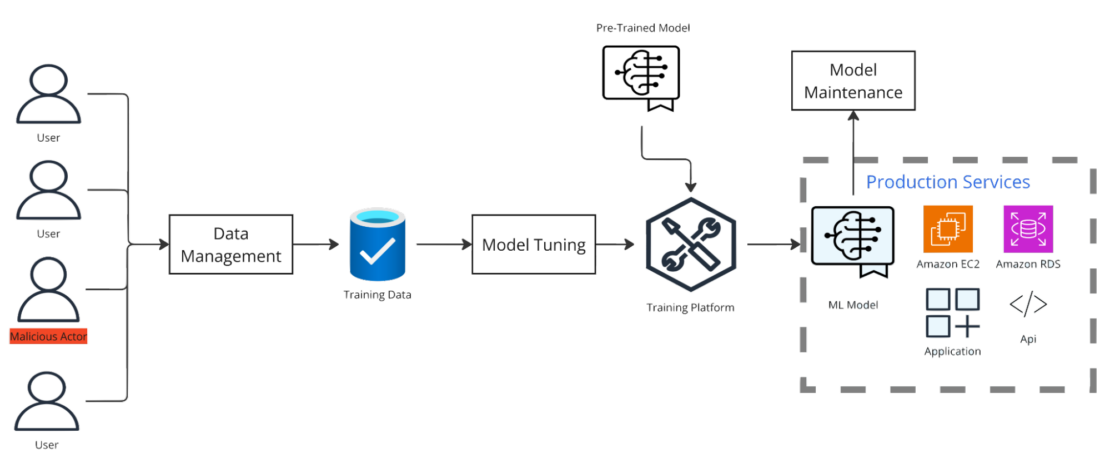

- Image Access Management and Registry Access Management: Enhance security governance by controlling exactly which images and registries your developers can access or push to, ensuring compliance with organizational security policies and minimizing risk exposure.

- Settings management: Enforce organization-wide security policies by locking default settings across Docker Desktop instances, ensuring consistent configuration and compliance throughout your team’s development environment.

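- Enhanced Container Isolation: Protect developer environments from malicious containers by running containers in a Linux user namespace, so that root inside a container has no privileges on the Docker Desktop VM, along with additional hardening.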

- Air-gapped containers: Restrict network activity originating from containers.

- Private extensions marketplace: Limit Docker Desktop extensions that developers can install.

- Kerberos and NTLM authentication for proxies: Centralize Docker Desktop authentication to network proxies without prompts.

- SOCKS5 proxy support: Enable the usage of SOCKS5 proxies for Docker Desktop.
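As an illustration of how Settings management locks a setting, the Python sketch below generates a minimal `admin-settings.json` payload that turns on Enhanced Container Isolation and prevents developers from changing it. The key names (`configurationFileVersion`, `enhancedContainerIsolation`, `locked`, `value`) are written from the Settings Management documentation as an assumption and should be verified against the current reference. The function name is hypothetical, and the file location is platform-specific and not shown here.

```python
import json


def build_admin_settings(lock_eci: bool = True) -> str:
    """Return an admin-settings.json payload as a JSON string.

    Assumption: each setting takes the form
    {"locked": <bool>, "value": <setting value>}.
    """
    settings = {
        "configurationFileVersion": 2,
        "enhancedContainerIsolation": {
            "locked": lock_eci,  # True prevents users from changing it
            "value": True,       # the enforced value
        },
    }
    return json.dumps(settings, indent=2)


print(build_admin_settings())
```

Generating the file from code rather than editing it by hand makes it easier to keep the same policy under version control and roll it out through device management tooling.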

License management

Tracking usage for licensing purposes can be challenging for administrators because Docker Desktop does not require authentication by default. By ensuring all users are signed in, administrators can use Docker Hub’s organization members list to manage licenses effectively.

This can be coupled with Docker Business’s Single Sign-On and SCIM capabilities to ease this process further.

Insights

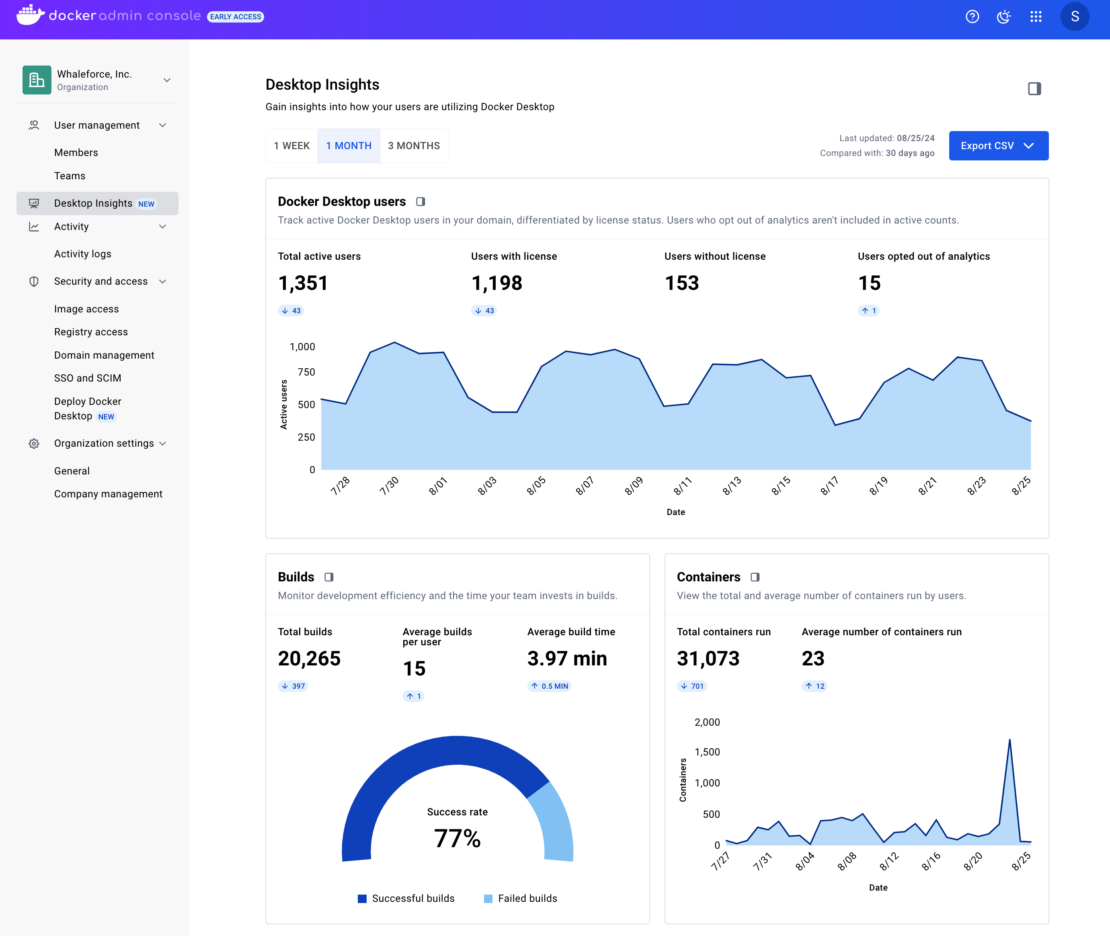

Administrators and other stakeholders (such as engineering managers) need a comprehensive understanding of Docker Desktop usage within their organization. With developers signed in to Docker Desktop, they gain actionable insights into usage, from feature adoption to image usage trends and login activity, which helps them optimize team performance and security. A dashboard offering these insights is now available to simplify monitoring. Contact your account rep to enable the dashboard.

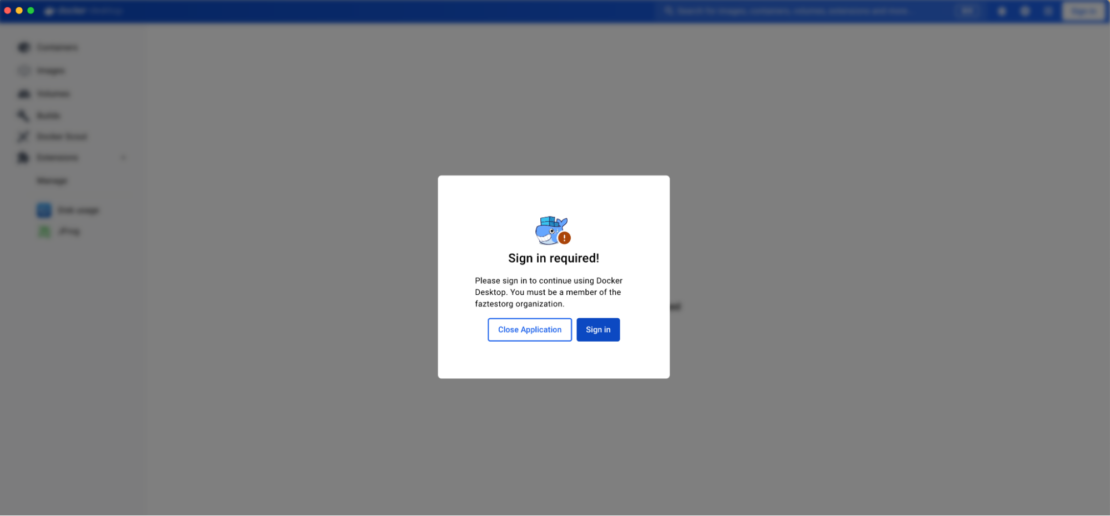

Enforce sign-in for Docker Desktop

Docker Desktop includes a feature that allows administrators to require authentication at start-up. Admins can ensure that all developers sign in to access Docker Desktop, enabling full integration with Docker’s security and productivity features. Sign-in enforcement helps maintain continuous compliance with governance policies across the organization.

When sign-in is enforced, developers are prompted at start-up and can click the sign-in button, which takes them through the authentication flow.

More information on how to enforce sign-in can be found in the documentation.
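One of the documented ways to enforce sign-in is a `registry.json` file that lists the organizations whose members may use Docker Desktop. The Python sketch below generates that file's contents. It assumes the `allowedOrgs` key and lowercase organization names as described in the documentation. The function name and `myorg` are hypothetical, and the platform-specific file location should be taken from the documentation.

```python
import json
from typing import Iterable


def build_registry_json(orgs: Iterable[str]) -> str:
    """Return registry.json contents restricting sign-in to the given orgs."""
    # Normalize names: strip whitespace and lowercase (assumed requirement).
    allowed = [org.strip().lower() for org in orgs if org.strip()]
    if not allowed:
        raise ValueError("at least one organization name is required")
    return json.dumps({"allowedOrgs": allowed}, indent=2)


# "myorg" is a placeholder organization name.
print(build_registry_json(["myorg"]))
```

The output can be written to the expected location by your deployment tooling. Docker Desktop then requires users to sign in as a member of one of the listed organizations.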

Unlock the full potential of Docker’s integrated suite

Signing in to Docker Desktop unlocks significant benefits for both developers and administrators, enabling teams to fully leverage Docker’s integrated, cloud-native suite. Whether the goal is improving productivity, securing the software supply chain, or enforcing governance policies, signing in maximizes the value of Docker’s unified platform, especially for organizations using Docker’s paid subscription plans.

Note that new features are introduced with each new release, so keep an eye on our blog and subscribe to the Docker Newsletter for the latest product and feature updates.

Up next

- Read Announcing Upgraded Docker Plans: Simpler, More Value, Better Development and Productivity.

- Learn more about the new Docker subscriptions.

- Get started with Docker Desktop.

- Subscribe to the Docker Newsletter.